Data Pipeline Automation & Orchestration

Modern financial institutions depend on continuous data flows to support reporting, analytics, and AI-driven decision-making. Manual or poorly managed data pipelines often lead to delays, failures, and inconsistent data delivery, all of which undermine trust in analytics outputs. At Datageny.com, our Data Pipeline Automation & Orchestration services help financial organizations automate the movement, transformation, and delivery of data across systems. By eliminating manual intervention, we ensure data pipelines run reliably, efficiently, and at scale.

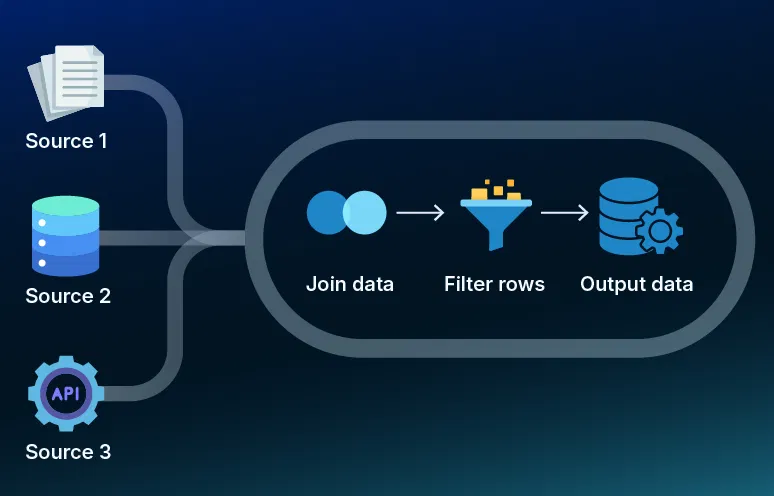

Orchestrating Complex Financial Data Workflows

Financial data environments are inherently complex, involving multiple sources, dependencies, and processing stages. Without orchestration, pipeline failures can cascade across systems and disrupt analytics workflows.

Our data orchestration solutions manage complex dependencies between ingestion, transformation, validation, and delivery processes. We design workflows that coordinate batch and streaming pipelines, ensuring tasks execute in the correct sequence and recover automatically from failures.
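To make the idea of dependency-aware sequencing concrete, here is a minimal sketch in Python. It assumes four hypothetical stage names (`ingest`, `transform`, `validate`, `deliver`) and uses the standard library's `graphlib.TopologicalSorter` to derive an execution order. It illustrates the general technique only and is not a description of any specific Datageny implementation.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical stage names; each maps to the set of stages it depends on.
DEPENDENCIES = {
    "ingest": set(),
    "transform": {"ingest"},
    "validate": {"transform"},
    "deliver": {"validate"},
}


def run_pipeline(tasks, dependencies):
    """Run every task after its dependencies; return names in execution order."""
    executed = []
    for name in TopologicalSorter(dependencies).static_order():
        tasks[name]()  # a real orchestrator would also record status here
        executed.append(name)
    return executed


# Stand-in tasks that only record that they ran (assumption for illustration).
log = []
TASKS = {name: (lambda n=name: log.append(n)) for name in DEPENDENCIES}

order = run_pipeline(TASKS, DEPENDENCIES)
print(order)
```

Declaring dependencies as data, rather than hard-coding the call order, means a new stage can be added by editing the mapping alone, and a cycle is reported as an error before any task runs.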

Improving Reliability and Performance of Data Pipelines

Pipeline reliability is critical for financial analytics, where late or inaccurate data can impact regulatory reporting, risk models, and customer-facing systems. Our automation frameworks are designed to maximize uptime and performance.

We build scalable data pipelines with fault tolerance, retry mechanisms, and performance optimization built in. These pipelines adapt to changing data volumes and processing demands while maintaining consistent performance.
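A retry mechanism of the kind mentioned above can be sketched as a small wrapper with exponential backoff. The function name `run_with_retry`, its parameters, and the simulated `flaky_load` task are all assumptions made for illustration. Production pipelines would typically also limit which exception types are retried and add jitter to the delay.

```python
import time


def run_with_retry(task, max_attempts=3, base_delay=0.5, sleep=time.sleep):
    """Call task(); on failure wait base_delay * 2**(attempt - 1), then retry.

    The final failure is re-raised so the caller can alert or escalate.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception:
            if attempt == max_attempts:
                raise
            sleep(base_delay * 2 ** (attempt - 1))


# Hypothetical task that fails twice with a transient error, then succeeds.
calls = {"count": 0}


def flaky_load():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("transient failure")
    return "loaded"


# Record the delays instead of actually sleeping, to keep the example fast.
delays = []
result = run_with_retry(flaky_load, sleep=delays.append)
print(result, delays)
```

Passing the `sleep` function as a parameter is a design choice that keeps the wrapper easy to test: the backoff schedule can be inspected without waiting for real time to pass.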

Supporting Real-Time and Batch Data Processing

Financial organizations require both real-time insights and historical analysis. Supporting these workloads simultaneously requires flexible and well-orchestrated data pipelines.

Our data pipeline automation services support real-time streaming data for use cases such as fraud detection and transaction monitoring, alongside batch processing for reporting and forecasting. We design architectures that seamlessly handle both processing modes within a unified orchestration framework.
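One common way to serve both processing modes is to keep the transformation logic in a single function and wrap it in separate batch and streaming drivers. The sketch below assumes a hypothetical `flag_large` rule with an arbitrary threshold. It is a stand-in for real monitoring logic, not an actual fraud-detection method.

```python
from typing import Iterable, Iterator


def flag_large(txn: dict, threshold: float = 10_000.0) -> dict:
    """Shared transformation: mark transactions above a hypothetical threshold."""
    return {**txn, "flagged": txn["amount"] > threshold}


def process_batch(txns: list[dict]) -> list[dict]:
    """Batch mode: transform a complete data set in one pass."""
    return [flag_large(t) for t in txns]


def process_stream(txns: Iterable[dict]) -> Iterator[dict]:
    """Streaming mode: transform records one at a time as they arrive."""
    for t in txns:
        yield flag_large(t)


# Made-up sample records, used only to show that both modes agree.
sample = [{"id": 1, "amount": 250.0}, {"id": 2, "amount": 25_000.0}]

batch_result = process_batch(sample)
stream_result = list(process_stream(iter(sample)))
print(batch_result == stream_result)
```

Because both drivers call the same function, a rule change applies to the real-time path and the historical path at once, which helps keep reports consistent with live monitoring.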